Reuse tracking

RDF metadata presents information about a work's ancestry in a machine-readable way. The websites of users who properly used our license chooser tool already have this set up. While it is possible to trace backwards to find a derived work's source, it is impossible to trace forwards to find all of a source work's derivatives without the aid of extra infrastructure.

On this page, you will find proposals for several ethical solutions to this problem (ethical meaning that they respect users' privacy and do not involve radio-tagging people with malware or DRM). These may be either systems that Creative Commons would prototype as a reference for other organizations building their own infrastructure, or systems that we would build and maintain ourselves, providing an API to interested parties (either free in the spirit of openness, or for a small fee to help offset hosting costs). Each of the proposed systems below has its own advantages and disadvantages; none of them is a silver bullet.

Proposal 1: Independent Refback Tracking

When a user opens a webpage, the browser sends some information to the server. Of particular interest is the /referrer string/ (sent in the HTTP Referer header). To put it simply, the referrer string contains the URL of the webpage that linked the user to the page they are currently viewing.
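As a minimal sketch of reading the referrer string on the server side, the function below looks it up in a plain dictionary of request headers. The function name `referring_url` and the choice to accept only absolute http(s) URLs are assumptions made for illustration, not part of any existing CC code.

```python
from urllib.parse import urlsplit


def referring_url(headers):
    """Return the referring page URL from request headers, or None."""
    # Header names are case-insensitive; the HTTP header is spelled "Referer".
    for name, value in headers.items():
        if name.lower() == "referer":
            candidate = value.strip()
            parts = urlsplit(candidate)
            # Only accept absolute http(s) URLs; anything else is ignored.
            if parts.scheme in ("http", "https") and parts.netloc:
                return candidate
    return None
```

Browsers may omit the header entirely (for example because of privacy settings), so any system built on it should treat a missing value as normal.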

The Refback Tracking framework would be hosted by the respective content providers, and served independently from CC. The advantage is that once a working system is prototyped, it would have little or no hosting cost for us, and would therefore require a minimal amount of maintenance.

The disadvantage of this approach is that it can only trace direct remixes of a work, not remixes of remixes.

Here is how it works:

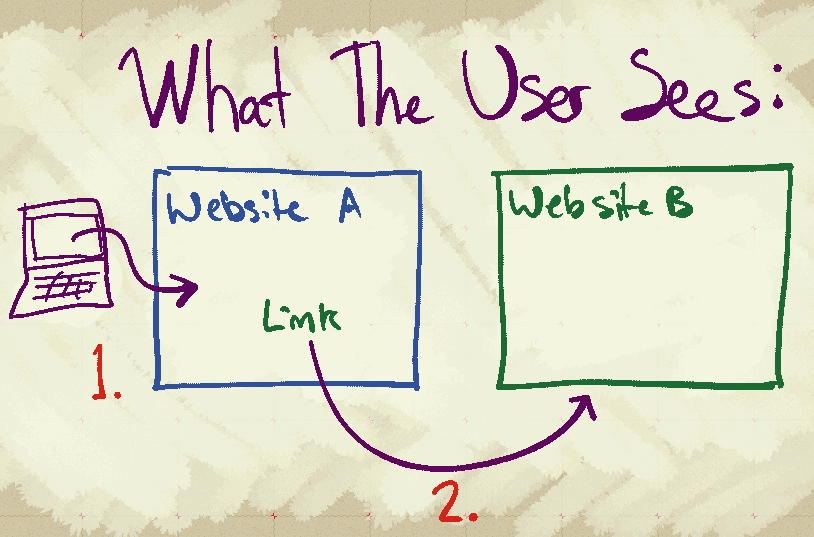

The above picture describes the sequence of events that triggers the tracking mechanism, shown from the user's perspective. The steps are as follows:

1. The user opens Website A. Website A contains a remixed work. The remix properly attributes the work from which it is derived, both visibly for the user and invisibly with metadata.

2. The curious user clicks on the link to the original work, and is taken to Website B as expected.

Here is what happens behind the scenes:

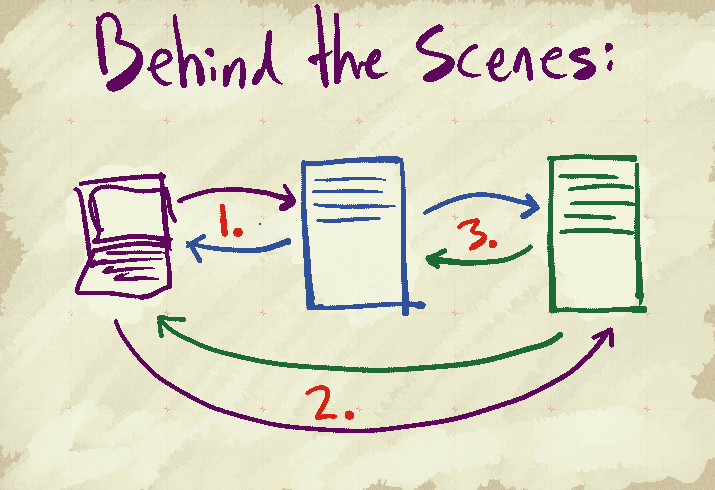

1. The user opens a website. The user's browser requests a page from a server. The website has a remixed work on it, which is attributed with metadata. The server replies to the user's request with the website.

2. The curious user clicks on the link to the original work. The user's browser sends a request to the server hosting the page of the original work. This request's referrer string contains the URL of the webpage with the remixed work on it. The server replies to the user's request as expected, and takes note of the URL in the referrer string (this can happen either using JavaScript embedded in the page, or with special code running on the web server itself).

3. The server hosting the original work downloads the page of the remixed work (as noted from the referrer string). The server of the remixed work replies as expected. The server hosting the original work reads the metadata on the downloaded page to verify that it indeed contains a remix of the original work. The server notes the URL in a database, to be used for generating reuse statistics.
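The verification in step 3 could be sketched roughly as follows. This assumes the remix's attribution is marked up as a link whose rel attribute contains `dct:source`; that rel value, the class and function names, and the in-memory dictionary standing in for the database are all illustrative assumptions. Downloading the page is left out, so the functions take the already-downloaded HTML as a string.

```python
from html.parser import HTMLParser


class SourceLinkParser(HTMLParser):
    """Collects href values of links whose rel attribute names a source work."""

    def __init__(self, source_rel="dct:source"):
        super().__init__()
        self.source_rel = source_rel  # assumed rel value, see lead-in
        self.sources = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").split()
        href = attr_map.get("href")
        if tag in ("a", "link") and href and self.source_rel in rel_values:
            self.sources.append(href)


def is_remix_of(page_html, original_url):
    """True if the page's metadata names original_url as a source work."""
    parser = SourceLinkParser()
    parser.feed(page_html)
    normalized = original_url.rstrip("/")
    return any(src.rstrip("/") == normalized for src in parser.sources)


def record_refback(db, referrer_url, page_html, original_url):
    """Store the referrer as a derivative only if its metadata checks out."""
    if is_remix_of(page_html, original_url):
        db.setdefault(original_url, set()).add(referrer_url)
        return True
    return False
```

Checking the metadata before recording anything matters because most referrers will be ordinary links, not remixes.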

Proposal 2: Hosted Refback Tracking

Hosted Refback Tracking is a variation of the system described in the first proposal. In this version, Creative Commons hosts the database server. The page which contains the work that is the target of reuse tracking will include a small bit of JavaScript, which CC would provide. This approach requires no changes to third-party servers. Our license chooser could include an opt-in option, which would automatically add the JavaScript to the HTML+RDFa license mark.

This approach requires that CC host a service, thus losing the main advantage of the first proposal. However, this version is easier for content creators to opt in to, as it requires no modifications to third-party web servers. If this service sees widespread use, the aggregate data could potentially yield a more robust graph than that described in the first proposal. However, the opt-in nature of this service makes this unlikely.

Here is how it works:

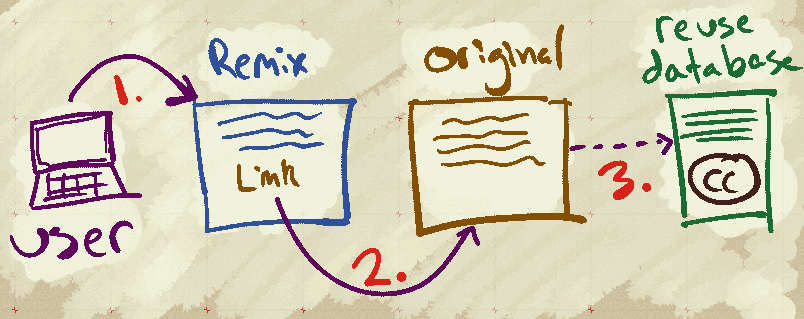

1. The user opens a website. The website contains a remixed work, which is attributed properly and contains the relevant metadata.

2. The user clicks on the link to the original work; the corresponding web page opens in the user's browser.

3. When the page loads, a script on the page sends the referrer information to the database server run by CC.

4. The database server reads the metadata from both pages; if one is indeed a remix of the other, the relationship between the two is recorded in our database.
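A rough sketch of the receiving side of this flow is shown below, using SQLite as a stand-in for the hosted database. The table layout, the function names, and the `verify` callable (a placeholder for the step that downloads both pages and compares their metadata) are assumptions for illustration only.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a small store of verified source/remix pairs."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS reuse ("
        " source_url TEXT NOT NULL,"
        " remix_url TEXT NOT NULL,"
        " PRIMARY KEY (source_url, remix_url))"
    )
    return conn


def handle_report(conn, page_url, referrer_url, verify):
    """Handle one report sent by the script on the original work's page.

    verify(remix_url, source_url) is a hypothetical callable that returns
    True when the metadata confirms the remix relationship.
    """
    if not referrer_url or referrer_url == page_url:
        return False
    if not verify(referrer_url, page_url):
        return False
    with conn:  # commits on success
        # The primary key makes repeated reports of the same pair harmless.
        conn.execute(
            "INSERT OR IGNORE INTO reuse VALUES (?, ?)", (page_url, referrer_url)
        )
    return True


def derivatives_of(conn, source_url):
    """List the recorded remixes of a source work."""
    rows = conn.execute(
        "SELECT remix_url FROM reuse WHERE source_url = ? ORDER BY remix_url",
        (source_url,),
    )
    return [row[0] for row in rows]
```

Because every visit through the attribution link triggers a report, the same pair will arrive many times; ignoring duplicates at insert time keeps the table to one row per relationship.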

Proposal 3: Hosted Scraped Data API

Creative Commons already has two pieces of infrastructure set up that could be adapted to collect reuse tracking information. These components are the license badges (when the HTML provided by the license chooser is used), and the deed scraper (a tool that is used to show attribution information on the deed page when the user is linked there from another site). We currently use the license badges to estimate the usage statistics for our licenses. Both the license badges and the deed scraper use referrer information to do their work.

In this proposal, when a license badge is downloaded, the referrer URL is stored in a queue. While the queue is not empty, a service periodically selects a URL from the queue, downloads the page, and reads the metadata on the page. If the metadata indicates a source work URL, that page would be downloaded and read as well.
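The queue processing described above might look something like the following sketch. The `read_source_urls` callable is a hypothetical stand-in for "download the page and read its metadata"; it is passed in so the loop itself stays independent of how pages are fetched and parsed. The `seen` set is an added assumption to avoid downloading the same page twice.

```python
from collections import deque


def drain_queue(queue, read_source_urls, edges, seen=None):
    """Process queued URLs until the queue is empty.

    queue: deque of page URLs taken from badge-request referrers.
    read_source_urls(url): hypothetical callable returning the source work
        URLs named in that page's metadata (empty if there are none).
    edges: set that receives (source_url, remix_url) pairs.
    """
    seen = set() if seen is None else seen
    while queue:
        url = queue.popleft()
        if url in seen:
            continue
        seen.add(url)
        for source in read_source_urls(url):
            edges.add((source, url))
            # The source page is downloaded and read as well, so it is
            # queued like any other URL.
            queue.append(source)
    return edges
```

Following source links in this way is what lets this proposal record remixes of remixes, which the referrer-only approaches cannot see.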

This information would allow us to build a bidirectional graph of usage data, which could be made accessible to third parties via an API or a dashboard application.
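One possible in-memory shape for such a graph is sketched below: each relationship is indexed in both directions, so a lookup works equally well from a remix to its sources or from a source to its derivatives. The class and method names are illustrative assumptions, not an existing API.

```python
from collections import defaultdict


class ReuseGraph:
    """Stores source/remix relationships, indexed in both directions."""

    def __init__(self):
        self._derivatives = defaultdict(set)  # source URL -> remix URLs
        self._sources = defaultdict(set)      # remix URL -> source URLs

    def add(self, source_url, remix_url):
        self._derivatives[source_url].add(remix_url)
        self._sources[remix_url].add(source_url)

    def sources_of(self, url):
        """Trace backwards: the works this one is directly derived from."""
        return set(self._sources.get(url, ()))

    def derivatives_of(self, url):
        """Trace forwards: the direct remixes of this work."""
        return set(self._derivatives.get(url, ()))

    def all_derivatives(self, url):
        """Trace forwards through remixes of remixes as well."""
        found, stack = set(), [url]
        while stack:
            for child in self._derivatives.get(stack.pop(), ()):
                if child not in found:
                    found.add(child)
                    stack.append(child)
        found.discard(url)  # guard against cycles in bad metadata
        return found
```

An API or dashboard would mostly be a thin layer over the two forward lookups, since tracing backwards is already possible from the metadata alone.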

Of the three proposals on this page, this one features the most aggressive data collection scheme (and therefore by far the most complete view of usage information). This scheme would also have the most demanding server load, and like the Hosted Refback Tracking proposal, would require ongoing maintenance from the tech team.

This scheme requires no modification to third-party websites to work, is completely invisible to the end user, and can be built by extending our existing infrastructure.

Here is how it works:

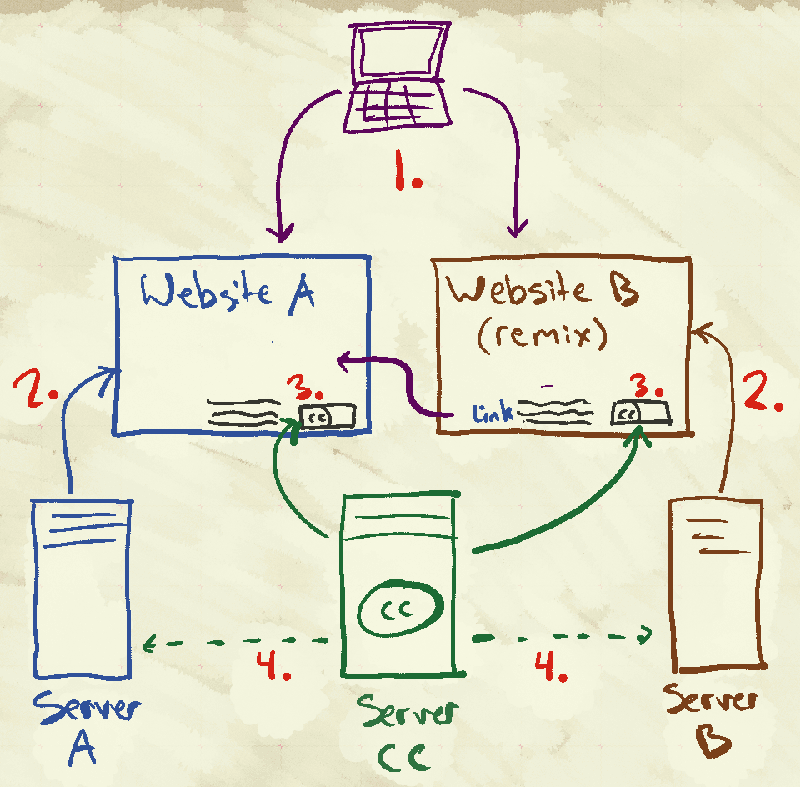

1. The user requests a webpage (either one).

2. The respective server responds to the user's request with the expected files, except for...

3. The license badge is (usually) served directly by CC. This allows us to estimate the adoption of the different licenses (and of their versions).

4. When a license badge is requested, a CC server could then read the metadata from the corresponding webpage, and use it to maintain a robust graph of reuse information.

Note that steps one through three describe how things already work today. Some of our existing infrastructure could be adapted to provide step four, making this proposal fairly easy to implement.